What is the TrustIndex?

There’s a moment just before a system transforms when you can feel the slope move under your feet.

At the start, the new tools look optional. The first-order effects have landed, but the second-order effects (the ones that change what it means to work, create, and believe) still feel like something we can debate. You can still imagine that we’ll argue it out, that a tidy policy layer will arrive, that institutions will adapt at roughly the same speed as the technology - or at least “soon enough”.

And then, quite suddenly, the debate stops mattering.

Not because we resolved it, and not because anyone (or anything) arrived in time. It stops mattering because the technology has become useful enough, cheap enough, and embedded enough that people adopt it whatever the debate concludes.

That is where we are with AI.

We’re entering a phase where the primary surface of the world (how you encounter information, opportunity, risk, and even your own attention) is increasingly mediated by systems you don’t fully understand (nobody does) - and cannot easily leave. In a post-truth world (MC 900ft Jesus was right - truth really did go out of style), the honest posture isn’t certainty. It’s working out a way to “monitor what’s changing” so we can keep “updating our own forecasts” - our own view of what’s coming.

That’s what the TrustIndex is here to track. Not the hype cycle, not the product launches - but the slope, the pressure, and the shape of the interface layer forming between you and reality.

Where are we right now?

We’re already living inside this transition, but it just doesn’t feel evenly distributed.

Some people are using AI like a slightly smarter autocomplete. Others are quietly delegating half their working week to it. A smaller group is building workflows that assume a model is present (and they feel weirdly exposed without it). That unevenness matters, because it creates a false sense of time.

If you’re in the “optional” camp, the debate still feels alive. You can still imagine opting out. You can still pretend this is mostly about better chatbots, or a new flavour of productivity tool.

If you’re in the “embedded” camp, the debate already feels like theatre. The system is useful enough that it has started to route around hesitation. People aren’t waiting for permission - they’re just shipping.

And as the tools slide into the background, the real question shifts. It becomes less “is this accurate?” (a question you can sometimes test) and more “what am I trusting (without noticing)?”

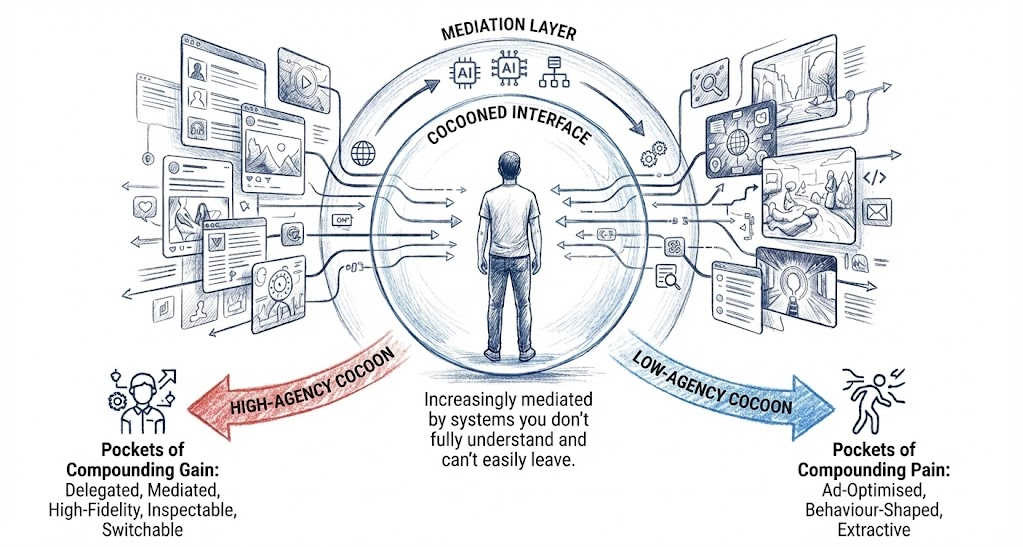

The Cocoon

If you follow the trajectory forward, a specific shape appears.

The Cocoon.

This is the shift from using apps to inhabiting an interface layer between you and the world. A lens that filters, annotates, summarises, recommends - and increasingly renders your reality.

We’ve already lived through the first version of this. The social media era gave us a feed-shaped reality (a world you scroll through). But the Cocoon era is what happens when that mediation becomes situated.

Two people can stand in the same room and inhabit different interfaces (different framing, different emphasis, different emotional colouring). The environment is shared - but the overlays are not. That sounds abstract until you notice how quickly this layer is thickening. More of your intake is summarised. More of your choices are pre-shaped. More of your attention is guided by systems whose incentives are not fully legible to you.

This creates a new social fault line.

There are high-agency Cocoons (systems that genuinely make you more capable, stable, and shielded).

And there are extractive Cocoons (free layers that are ad-driven, behaviour-shaping, and optimised for someone else’s outcomes).

When the interface gets this thick, the attention economy doesn’t end. It just gets rerouted. Persuasion moves one level deeper (ambient, environmental, harder to spot, and harder to escape). For a growing chunk of the population, raw-dogging reality may start to look like a status marker.

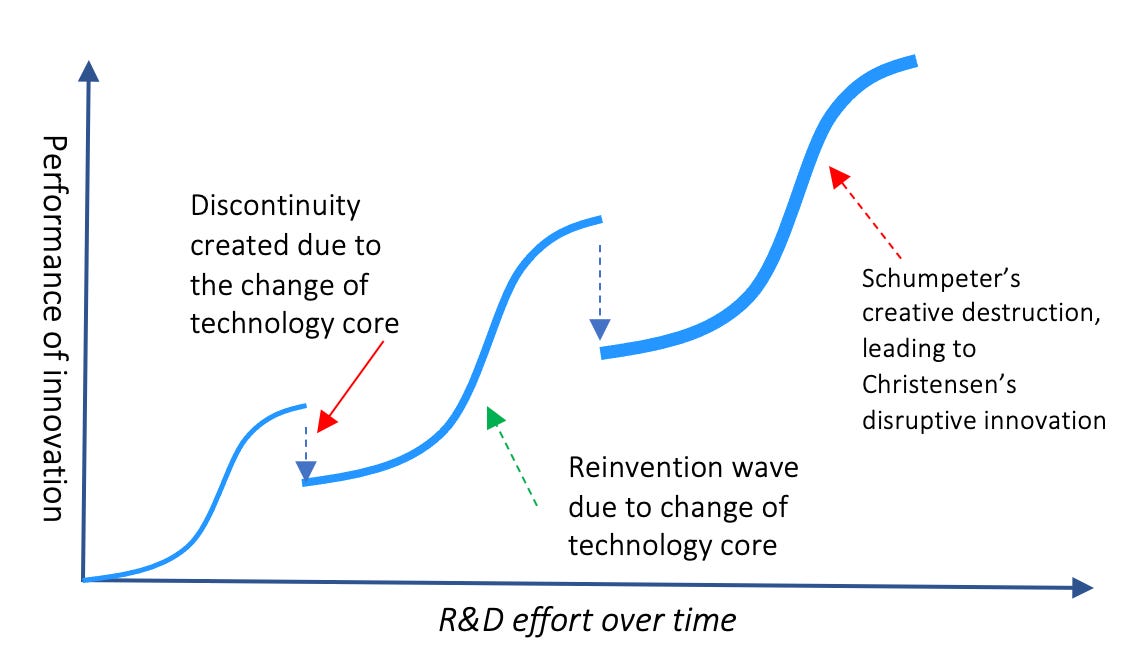

The next discontinuity isn’t ‘better models’

It’s tempting to frame this as a simple curve (models get better, the outputs get more impressive, the demos get weirder, and we all clap). But that isn’t the discontinuity.

The discontinuity is when the model stops behaving like a tool you consult, and starts behaving like a layer you inhabit. This is a geometric change in our relationship with it.

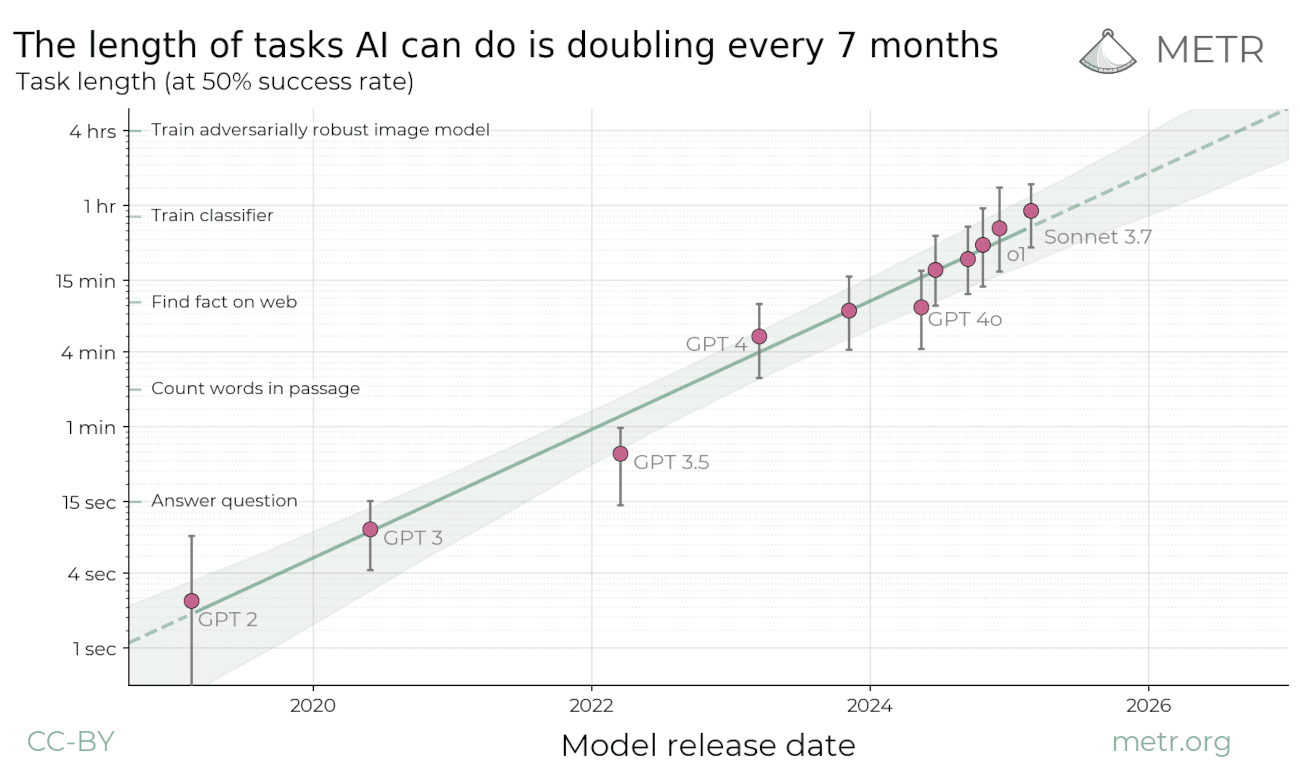

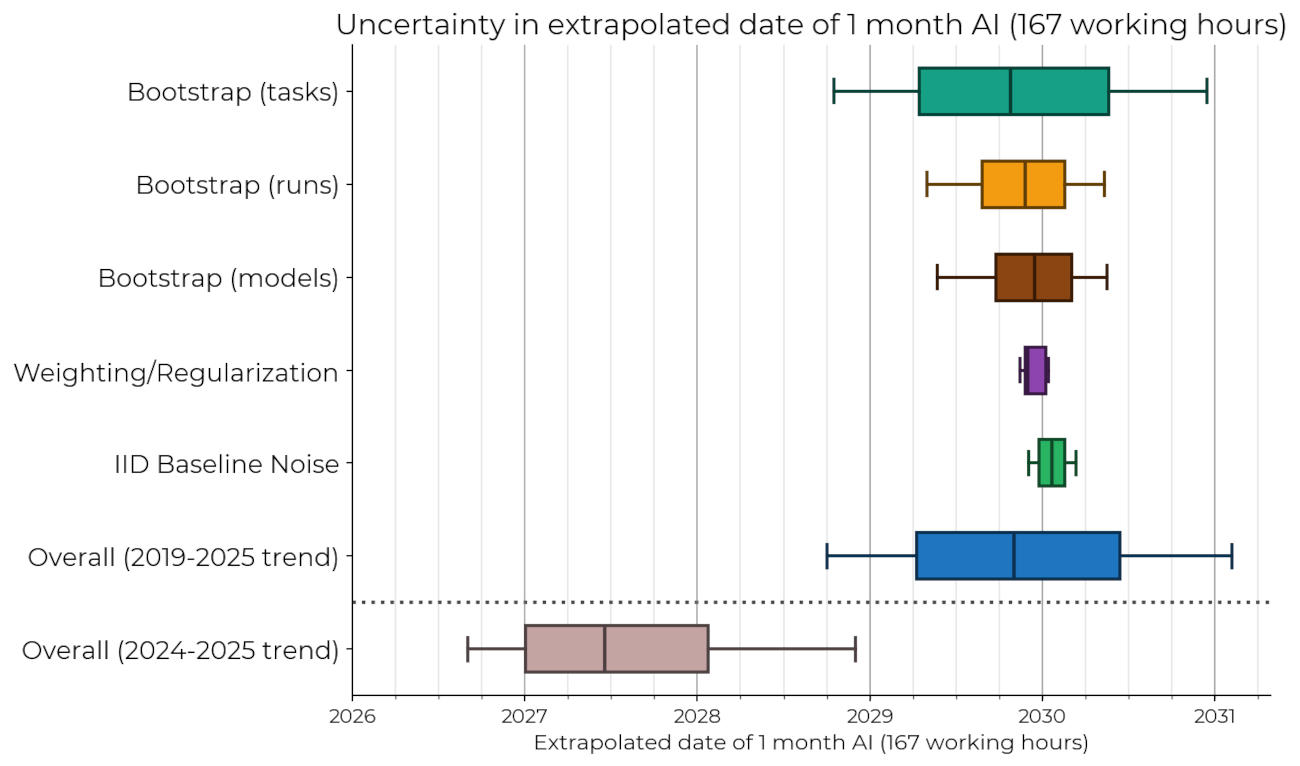

That shift has a few ingredients. One is autonomy (not “it can answer harder questions”, but “it can run longer without babysitting”). When systems can take a goal, hold context, use tools, recover from errors, and keep going, the interface stops being a conversation and becomes a delegated process.

Another is speed. Once teams (and increasingly individuals) can run fast loops (idea → experiment → interpret → deploy), software stops being something you ship occasionally and starts becoming something that evolves around you.

This is where the phrase “an ocean of software” starts to feel less like a metaphor and more like a description. We’re moving from “software as a landscape” (stable, mapped, predictable) to “software as an ocean” (fluid, deep, and always moving). In that world, the concept of reliability shifts. It stops being a fixed property of the product and becomes a reliability profile you internalise.

You don’t trust a system because it never changes. You trust it because you can predict how it changes. Software stops acting like a tool and starts acting like a person.

Verification collapses & optimisation moves upstream

In the old world, you could verify outputs. You could click through, audit the path, and get a feel for what you were shown (and what you weren’t shown). You could check page 2, page 3, or further into your search results.

But as the interface thickens, verification collapses. Not because we stop caring, but because there is simply too much. Too many summaries, too many generated views, too many micro-decisions delegated to systems that feel (to us) like a single smooth surface.

This is where optimisation moves upstream. We already have SEO. Now we have GEO (Generative Engine Optimisation). In other words, we are learning (at speed) how to influence the layer that influences you.

That is not a conspiracy. It is an economic inevitability. The rewards and business models are just wired up that way. If the interface layer becomes the new terrain, then every actor with incentives will learn how to shape it. That shaping won’t always look like persuasion. Sometimes it will look like “helpfulness.” Sometimes it will look like “defaults.” Sometimes it will look like “what the model remembers about you.”

And in a mediated world, the most important question is often the one you can’t easily ask.

What did it not show you?

Media is becoming an interface to reality

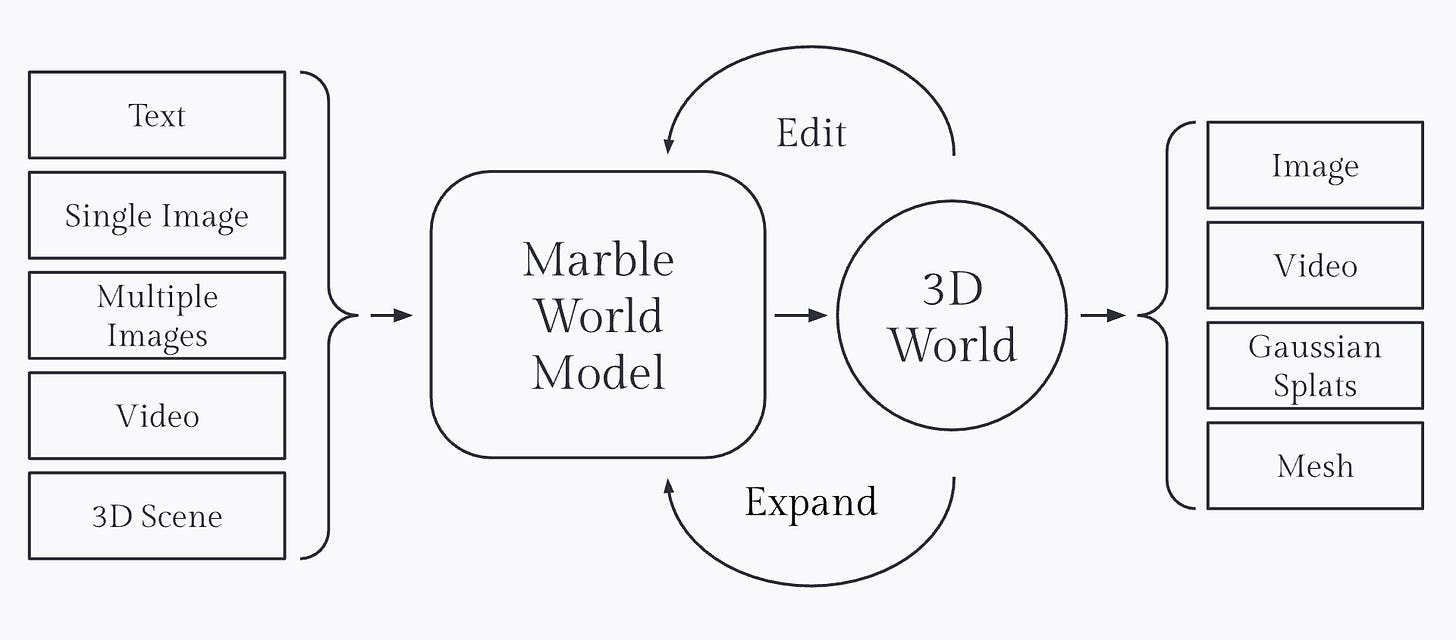

There’s another ingredient here that matters. The interface isn’t just summarising the world. It is starting to render it.

We’re watching synthetic media cross a threshold from novelty to infrastructure. Not done, not stable, but clearly on the path. World Generation is the extreme version of that path (not just content, but environments). You can read more about this trend in the first TrustIndex / Report.

Once your interface can produce convincing scenes (not just text), the Cocoon stops being metaphorical. It becomes literal.

When that happens, “truth” becomes a less useful organising concept. Not because truth is unimportant, but because it is too slow, too contested, and too easy to route around. Trust is different. Trust is a structural property. It is something you can measure. It’s something that’s required.

And in a world where the primary surface of experience is mediated, trust may become the only stable currency left.

Why trust?

So what stays stable when everything’s changing faster & faster?

Policies lag. Institutions fracture. Truth is contested.

Only trust remains. Not trust as a vibe. But Trust as Infrastructure (TaI).

Ideally, this is a set of properties you can interrogate. For example:

Can you see why it made that decision (and what evidence it used)?

Can you leave (and take your context with you)?

Is it working for you (or for the provider)?

Does it make you more capable (or just more dependent)?

Does it widen opportunity (or concentrate it)?

We probably won’t end up where we want to, but if we keep these ideals in mind at least we can map how and when we are drifting away from them.

The question isn’t “Will AI change the world?” The question is “What kind of interface layer are we building?” And of course, who does it serve?

It’s unlikely that we’ll get to opt out of this slippery slope. But we do get to decide whether we blindly slide - or whether we at least attempt to steer.

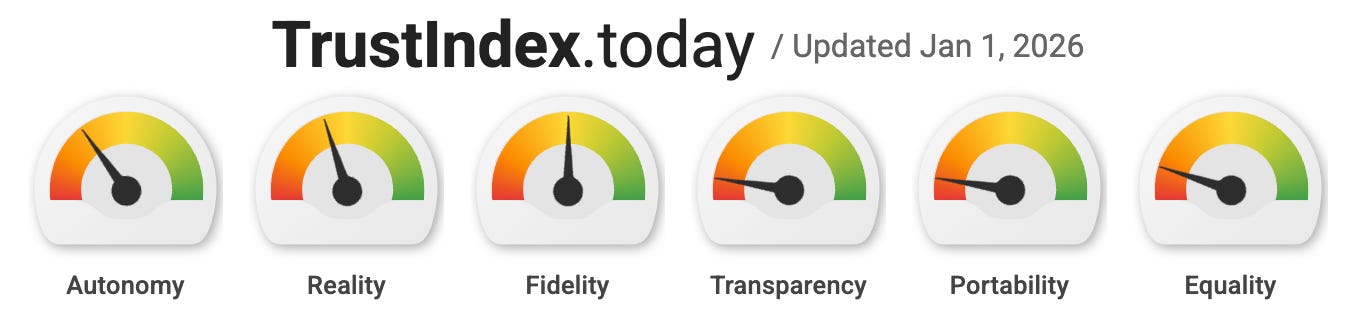

Introducing the TrustIndex dashboard

To make this transition legible, I’m launching the TrustIndex.

This isn’t a blog about product launches. It’s an instrument panel (built to track the reliability profile of the emerging interface layer). It does that using six dials:

Autonomy - How far systems run without babysitting

Reality - How thick the mediation layer is between you and the world

Fidelity - How convincing and coherent that layer feels over time

Transparency - Can you see why it did what it did?

Portability - Can you leave and take your Cocoon with you?

Equality - Who gets uplift and who gets squeezed

You can find the live, regularly updated dashboard at TrustIndex.today.

To keep the panel grounded (not hand-waving vibes, hype, or doom & gloom), it is supported by three levels of intelligence.

Signals are real-time updates on specific features, papers, and releases that pressure the dials.

Briefings are the weekly synthesis. What those signals imply, and what’s starting to cluster into a trend.

Reports are the monthly consolidation. The baseline, the pressure, and what looks like it is steepening next. They’re designed as a shareable snapshot of where the slope is steepening (and where it isn’t). If you only read one thing a month, it should be the Report.

And if you want the deeper interpretability and geometry work behind these frames, that still lives in the Latent Geometry Lab. The TrustIndex is the bridge (technology to meaning, capability to culture, speed to stability).

If everything’s changing faster & faster, you need a way to measure the rate of change.

Welcome to the TrustIndex.

Join in as we track this slope and stay up to date. You can always find the current dashboard at TrustIndex.today and subscribe for regular updates as new Signals arrive, weekly Briefings are published, and new Reports are released.