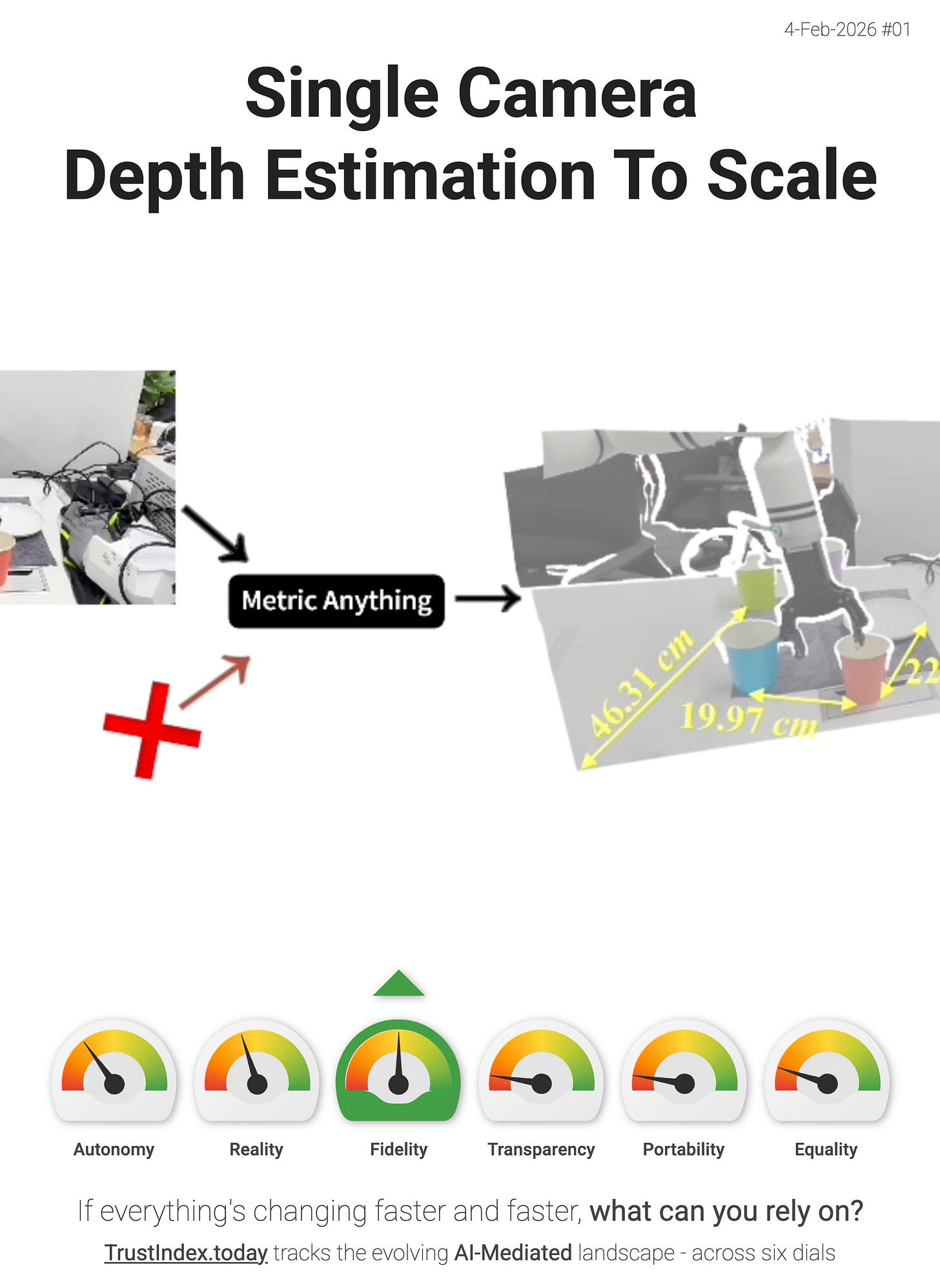

Single Camera Depth Estimation To Scale

Metric Anything is one of those Signals that sounds a bit niche - until you realise it’s a prerequisite for almost everything we keep calling “spatial computing”.

It’s a new approach that aims to produce metric (to-scale) depth from a single camera, and can also recover camera intrinsics and use sparse depth as an input. In other words - it’s pushing toward a world where “how far away is that?” or “how big is that?” becomes a default capability for commodity cameras, not something you only get from specialised sensors or carefully controlled setups.
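To make “how big is that?” concrete, here is a minimal sketch of what metric depth plus intrinsics buys you, using the standard pinhole camera model. It is not Metric Anything’s code or API: the `Intrinsics` class, the function names, and the example numbers are all hypothetical, and it assumes depth is measured along the optical axis (z-depth) in metres.

```python
import math
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole camera intrinsics in pixels (hypothetical container)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def backproject(u, v, depth_m, k):
    """Lift pixel (u, v) with metric z-depth into a 3D point in the camera frame."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def size_between(pixel_a, pixel_b, depth_a_m, depth_b_m, k):
    """Real-world distance in metres between two pixels, given their metric depths."""
    p = backproject(pixel_a[0], pixel_a[1], depth_a_m, k)
    q = backproject(pixel_b[0], pixel_b[1], depth_b_m, k)
    return math.dist(p, q)


# Illustrative numbers only: a 1280x720 camera with a 1000 px focal length,
# and the two edges of an object 200 px apart, both 2 m away.
k = Intrinsics(fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
width_m = size_between((540, 360), (740, 360), 2.0, 2.0, k)  # about 0.4 m
```

The point of the sketch: with only relative depth, `depth_m` is known up to an unknown scale factor, so `width_m` is too. Metric depth and recovered intrinsics are exactly the two inputs this calculation needs.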

Why this matters for TrustIndex - this puts upward pressure on the Fidelity dial.

High-fidelity AI-Mediation isn’t just prettier overlays - it’s “correct overlays” and AI-based “spatial reasoning”. If your system can infer real-world scale and geometry reliably, then:

- AR/UI layers can sit on the world instead of floating near it

- agents can reason about reach, clearance, placement, and navigation with fewer hallucinated distances

- “digital twins” become less like vibes and more like measurements

- the hand-off from perception → action gets tighter (robots, assistants, assistive tech, tools)
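As a rough illustration of the clearance point above, here is a hedged sketch of how an agent might turn a measured gap into a go/no-go decision. The function name, the 5% relative depth error, and the 2 cm safety margin are assumptions for illustration, not figures from Metric Anything. It relies on the fact that, under a pinhole model, a width measured at a given depth scales linearly with that depth, so a relative depth error carries over to the width.

```python
def fits_through(gap_m, object_m, rel_depth_err=0.05, margin_m=0.02):
    """Conservative clearance check.

    gap_m:         gap width estimated from metric depth, in metres
    object_m:      width of the thing that must pass through, in metres
    rel_depth_err: assumed relative depth error (hypothetical default of 5%)
    margin_m:      extra safety margin in metres (hypothetical default of 2 cm)
    """
    if not 0.0 <= rel_depth_err < 1.0:
        raise ValueError("rel_depth_err must be in [0, 1)")
    # Shrink the measured gap by the assumed error to get a worst-case width.
    worst_case_gap_m = gap_m * (1.0 - rel_depth_err)
    return worst_case_gap_m >= object_m + margin_m


# Illustrative numbers: an 80 cm doorway.
ok_narrow = fits_through(0.80, 0.70)  # True: 0.76 m worst case vs 0.72 m needed
ok_wide = fits_through(0.80, 0.75)    # False: 0.76 m worst case vs 0.77 m needed
```

The better the depth estimate, the smaller `rel_depth_err` can be, and the fewer borderline cases the agent has to refuse.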

This is the quiet kind of progress that makes future interfaces feel inevitable. Once you can get metric depth from “just a camera”, you can ship spatial understanding everywhere (phones, glasses, laptops, drones) without waiting for the hardware stack to catch up.

“...its distilled prompt-free student achieves state-of-the-art results on monocular depth estimation, camera intrinsics recovery, single- and multi-view metric 3D reconstruction, and VLA planning. We also show that using the pretrained ViT of Metric Anything as a visual encoder significantly boosts Multimodal Large Language Model capabilities in spatial intelligence.” - Metric Anything

> The interface layer is thickening. If you disagree with my interpretation, or you’ve spotted a better signal, then reply and tell me.