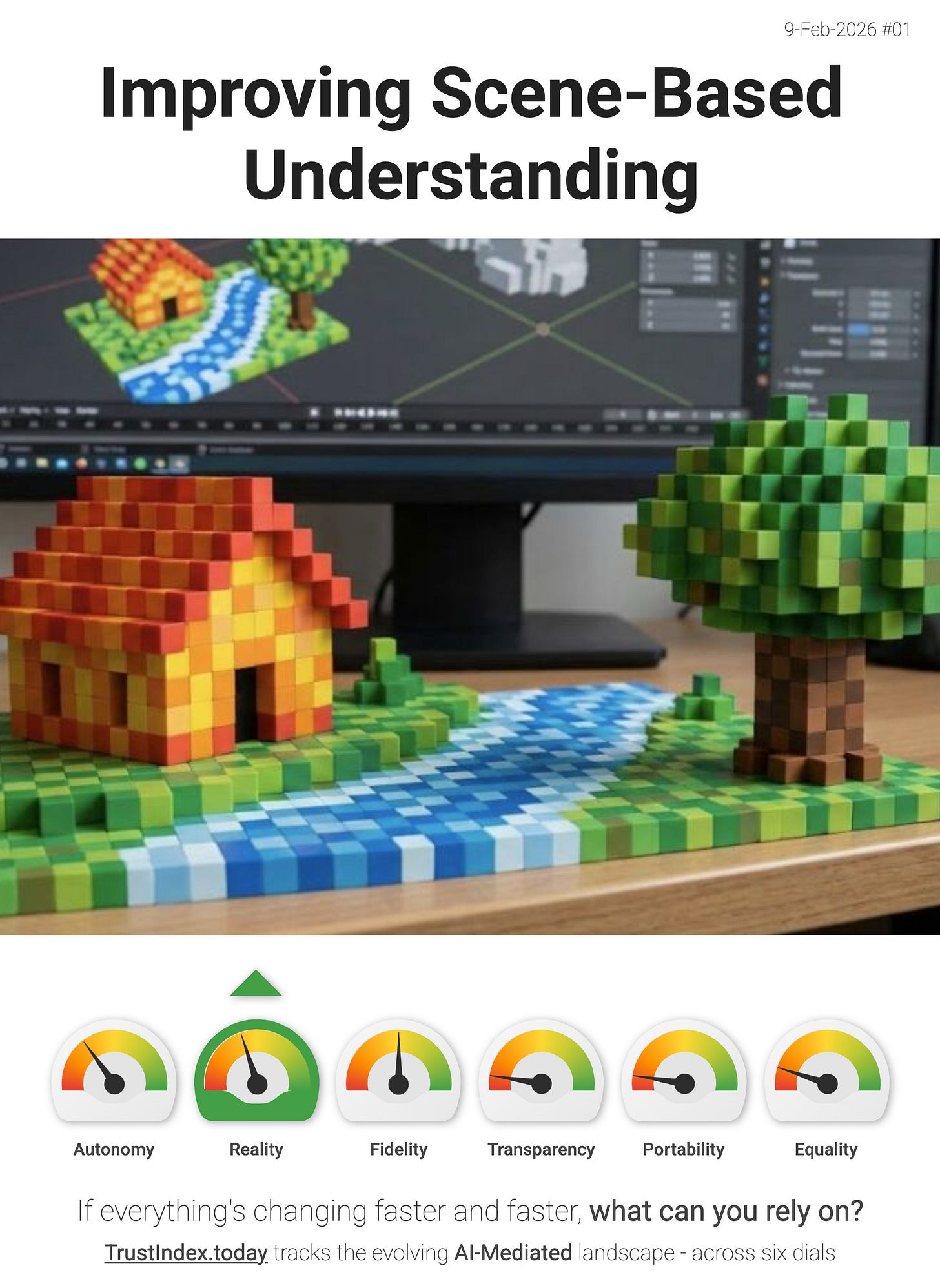

Improving Scene-Based Understanding

OpenVoxel is another step towards AI that doesn’t just see images - it understands scenes.

The core idea: a training-free method that can add voxel/3D-style scene structure to existing vision models, improving their ability to analyse and reason about what’s happening in a space (objects, layout, relationships) without needing to retrain the model from scratch.

That’s Reality dial pressure.

Because once scene understanding gets cheaper and more portable (something you can bolt onto today’s models), it becomes much easier for AI to sit between you and the world in real time:

- More of the world becomes legible. Not just “there’s a chair”, but “the chair is behind the table, blocking the path”, “the mug is near the edge”, “that surface is reachable”.

- Context becomes actionable. Better scene structure means better grounding for assistants that navigate, guide, and decide - in AR, robotics, accessibility, and everyday “what am I looking at?” use-cases.

- It scales without a big retraining tax. Training-free approaches spread fast, because they’re adoptable by anyone already shipping a vision model.

“...OpenVoxel successfully builds an informative scene map by captioning each group, enabling further 3D scene understanding tasks such as open-vocabulary segmentation (OVS) or referring expression segmentation (RES).” - OpenVoxel
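To make "a scene map built by captioning each group" concrete, here is a toy sketch in Python. It is not OpenVoxel's code: the `VoxelGroup` class, the `select_voxels` function, and the captions are all hypothetical, and plain keyword matching stands in for whatever language-model reasoning the real system uses. The point is only the shape of the idea: once every group of voxels carries a caption, a free-text query can pick out regions of 3D space.

```python
from dataclasses import dataclass

Voxel = tuple[int, int, int]  # integer grid coordinates (x, y, z)


@dataclass
class VoxelGroup:
    """Hypothetical scene-map entry: one object's voxels plus a caption."""
    voxels: set[Voxel]
    caption: str


def select_voxels(scene_map: list[VoxelGroup], query: str) -> set[Voxel]:
    """Return the voxels of every group whose caption contains all query words.

    Keyword matching is an assumption made for illustration only; it is a
    stand-in for a real text-to-caption matching step.
    """
    words = query.lower().split()
    selected: set[Voxel] = set()
    for group in scene_map:
        caption_words = group.caption.lower().split()
        if all(word in caption_words for word in words):
            selected |= group.voxels
    return selected


# Made-up scene: three captioned groups on a tiny voxel grid.
scene_map = [
    VoxelGroup({(0, 0, 0), (1, 0, 0)}, "a wooden chair"),
    VoxelGroup({(3, 0, 0), (3, 1, 0), (4, 1, 0)}, "a round dining table"),
    VoxelGroup({(3, 2, 0)}, "a white ceramic mug"),
]

print(select_voxels(scene_map, "mug"))
```

Asking for "mug" returns only the mug's voxels, and asking for something that isn't in the scene returns nothing. Swap the keyword match for a stronger text matcher and you have the rough outline of a query-to-region lookup.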

This isn’t a consumer feature on its own. But it’s exactly the kind of infrastructure upgrade that quietly turns into mainstream behaviour once it lands inside phones, glasses, browsers, and assistants.

> The interface layer is thickening. If you disagree with my interpretation, or you’ve spotted a better signal, reply and tell me.