Giving Sensors The Power Of Language

Archetype AI’s TimeFusion attempts to turn the physical world’s sensor output into something you can talk to, and that can even talk back to you and your agents.

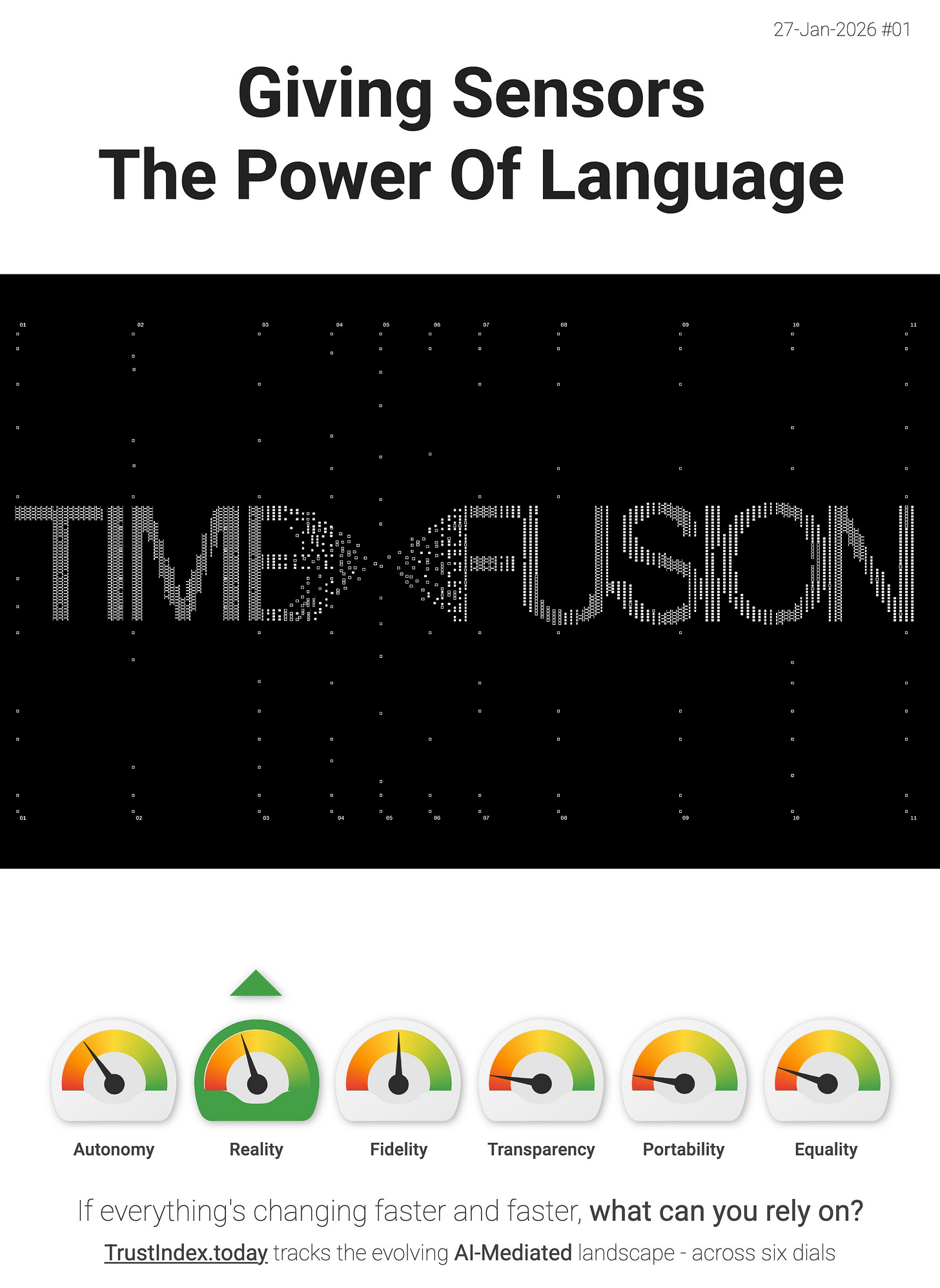

Archetype AI’s TimeFusion is a strong Reality dial signal. TimeFusion (Newton) is framed as a sensor–language fusion model that unifies human language and time-series sensor data using “Universal Tokens,” so you can have natural conversations with sensors and machines for insight, prediction, and control. That’s the interface thickening: more of the world around you becomes legible through language, which also makes it easier to route into LLM workflows.

The most “interface-y” detail: TimeFusion isn’t just sensor → text. Archetype says it can also do text → sensor, generating realistic time-series data from natural language prompts (and even combining examples with text prompts to fill gaps or create “what-if” simulations). In other words, language becomes a control surface for sensing, simulation, and actuation.

“What if anyone could have a natural conversation with any sensor or machine - and the sensor could respond? Explain what it’s seeing, predict what might happen next, detect and localize anomalies, or even generate new signals to control a robot or machinery inside an intelligent factory.” - Archetype AI

It isn’t mainstream yet, but it’s a clear direction-of-travel signal. Here your Cocoon doesn’t just wrap screens and apps. It starts wrapping sensors, streams, and physical systems, with language as the universal interface.

> The interface layer is thickening. If you disagree with my interpretation, or you’ve spotted a better signal, reply and tell me.