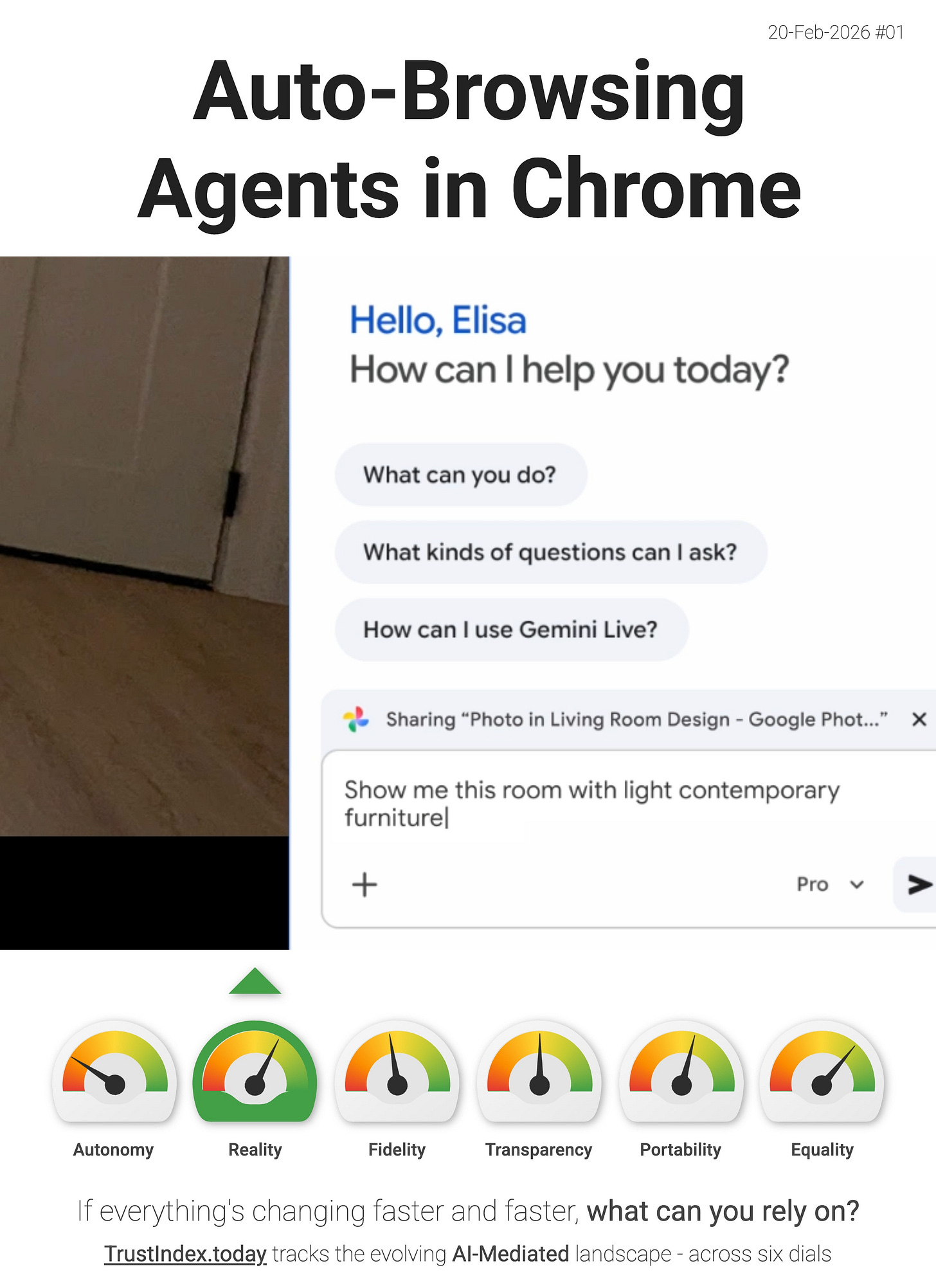

Auto-Browsing Agents in Chrome

Google has added a Gemini “auto-browse” capability in Chrome that can scroll, click and type to complete web tasks on a user’s behalf.

It’s positioned as browser-level tooling for autonomous task completion, rather than a separate app experience.

This puts upward pressure on the Reality dial as agents take on more of our daily tasks.

When the browser can execute steps end-to-end, the user spends less time verifying pages directly and more time accepting an agent’s interpretation of what it opened, what it ignored, and what it decided was “good enough” to act on. That shifts the locus of trust from the raw web to the model-mediated interface.

“From smarter assistance to agentic browsing, discover how the latest AI updates are making Chrome more helpful than ever.” - Google

As this pattern normalises, the “agent-as-interface” layer thickens: the model becomes the practical gateway to information and action, deciding which sources are surfaced and which are silently bypassed. In Reality terms, that concentrates power in the selection and sequencing logic, because the model isn’t just summarising the web; it is choosing and performing the path through it, leaving the user fewer moments of friction in which to notice mistakes.

> The interface layer is thickening. If you disagree with my interpretation, or you’ve spotted a better signal, reply and tell me.