Apple Bets $2B On The Evolution Of Silent-Speech

Apple has reportedly made a ~US$2B bet on “silent speech” - acquiring q.ai, which uses optical tracking of tiny facial micromovements to infer what you’re saying without audible speech.

This is upward pressure on the Reality dial.

Silent-speech interfaces don’t just make input faster - they make the interface less visible. You don’t need to type. You don’t even need to speak. The interaction layer moves closer to intent, and the AI sits between your internal articulation and the external world as the default mediator.

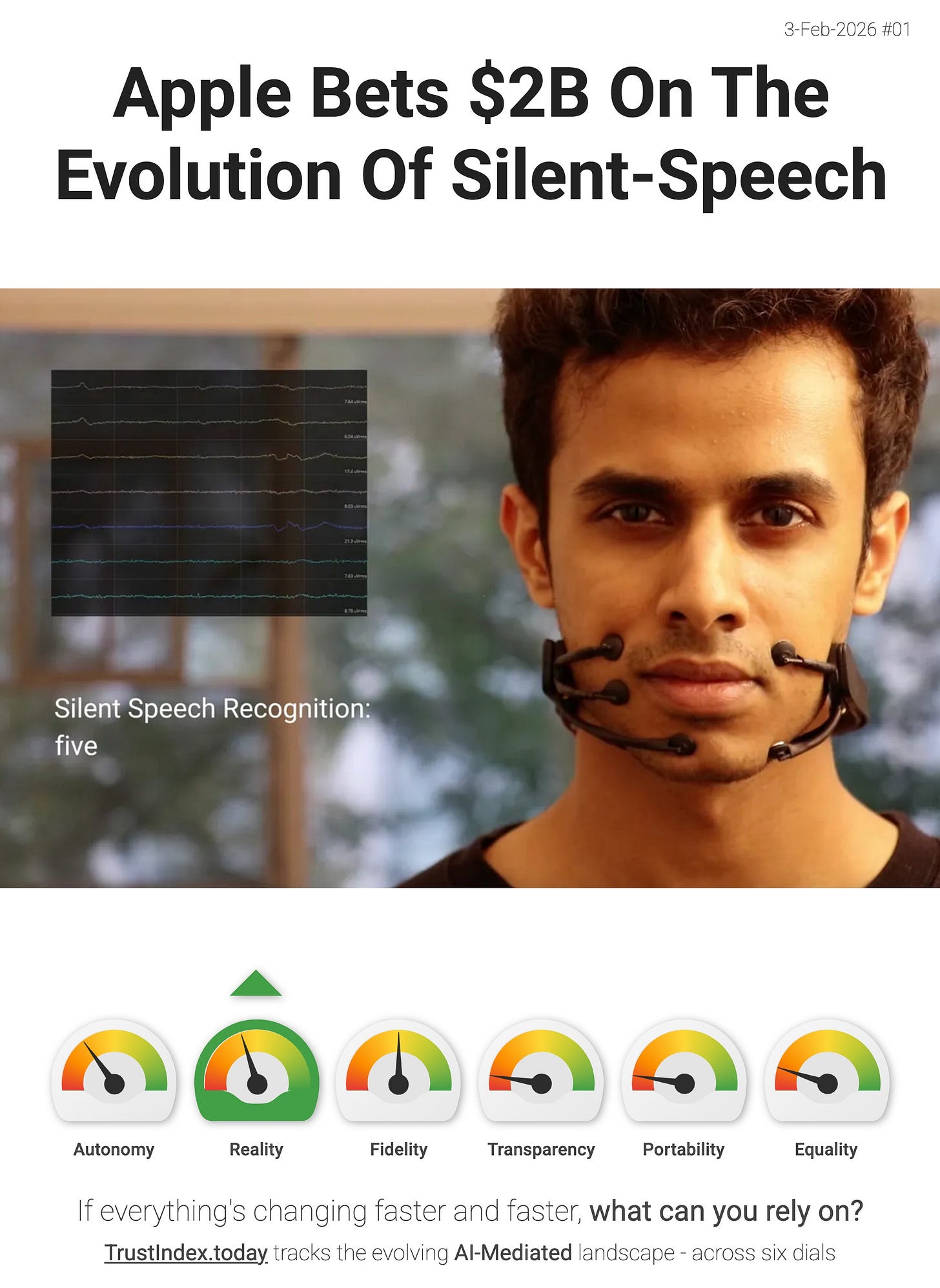

It’s also not a brand-new category. This acquisition builds on a line of research going back at least to MIT’s 2018 AlterEgo project - a wearable “silent speech interface” that captures neuromuscular signals associated with internal speech and turns them into a discreet, two-way interface (with private audio feedback - see image). The implementation details differ (q.ai’s optical approach vs AlterEgo’s EMG-style capture), but the direction is the same: communication becomes sub-vocal, ambient, and increasingly mediated by an assistant.

“Q.ai specializes in machine learning techniques that let devices interpret whispered or silent speech and enhance audio in noisy environments. The startup uses a mix of audio processing and imaging to read subtle facial expressions.”

- Entrepreneur.com

- AlterEgo

If this sticks, it thickens the interface in a particularly consequential way: the “default UI” isn’t a screen or a voice command, it’s an always-available layer that can translate micro-intent into actions and messages, even when silence is required.

> The interface layer is thickening. If you disagree with my interpretation, or you’ve spotted a better signal, reply and tell me.