AI Is A Mirror

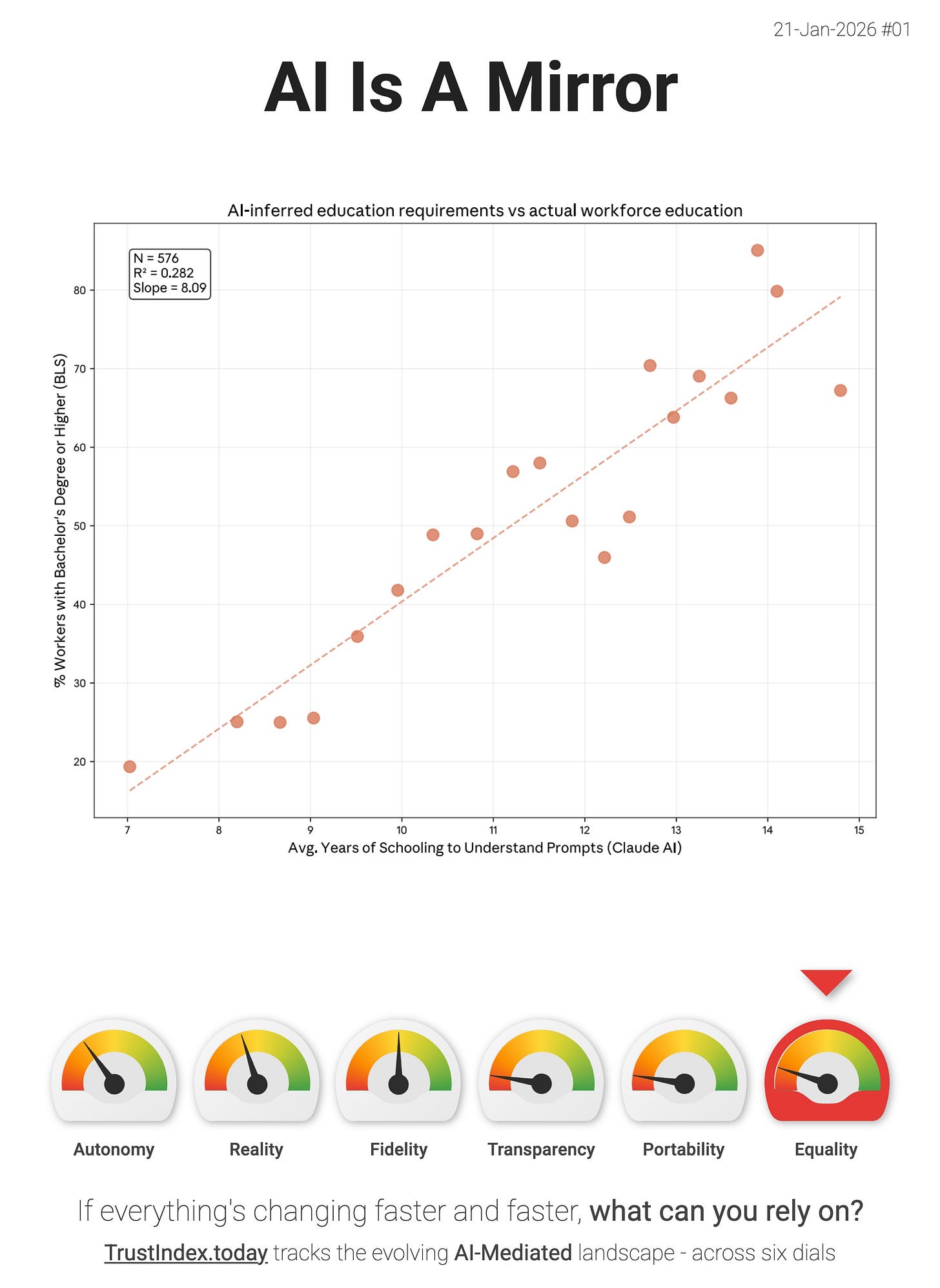

Anthropic just published a graph that’s worth sitting with: “education years needed to understand the human prompt” vs. the share of workers with a Bachelor’s degree (by occupation).

They take BLS/ACS educational attainment data by detailed occupation, then estimate (from Claude usage) the average years of schooling needed to understand the tasks/prompts associated with each occupation, and plot that against the % of workers with a BA+. The relationship is directionally clear: the occupations whose prompts look “more educated” tend to be the ones with more degree-holders.
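To make the comparison above concrete, here is a minimal sketch of how an occupation-level relationship like this could be checked. It assumes a plain Pearson correlation, which is my choice for illustration and not necessarily the statistic Anthropic used. The occupation labels and every number below are hypothetical placeholders, not figures from the report.

```python
from math import sqrt

# HYPOTHETICAL placeholder rows, not data from Anthropic, BLS, or ACS.
# Each tuple: (occupation label,
#              estimated years of schooling needed to understand the prompts,
#              share of workers in that occupation with a BA or higher)
occupations = [
    ("occupation_a", 12.0, 0.15),
    ("occupation_b", 13.5, 0.30),
    ("occupation_c", 15.0, 0.55),
    ("occupation_d", 16.5, 0.80),
]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of cross-products and squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


prompt_years = [row[1] for row in occupations]
ba_share = [row[2] for row in occupations]

r = pearson(prompt_years, ba_share)
print(f"Pearson r across occupations: {r:.2f}")
```

A value of r near 1 would match the "directionally clear" reading. Note that the calculation runs across occupations, so it describes occupations as a group, not any individual worker in them.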

This puts downward pressure on the Equality dial. If the ability to “use” AI is gated by “prompt literacy” (which itself tracks educational attainment), then AI becomes a mirror that reinforces existing advantage - higher-credential workforces extract more value, faster, while everyone else gets a thinner slice of the productivity upside.

“...our human education measure correlates with actual worker education levels across occupations. These validations suggest individual primitives are directionally correct—and combining them may provide additional analytical value, such as enriching productivity estimates with task success rates or constructing new measures of occupational exposure.” - Anthropic

This is the uncomfortable version of “AI for everyone” - it may be widely available, but not equally legible. And that’s how unequalising effects compound.

> The interface layer is thickening. If you disagree with my interpretation, or you’ve spotted a better signal, reply and tell me.

You presume that only those who hold degrees are actually intelligent, but intelligence is inherent, not on paper. This study is flawed, like much of Anthropic's mess, because they only looked at those who had paper qualifications. Yet there are masses of people whose situation in life didn't enable them to gain a degree, yet who, if tested, would likely exceed the general intelligence of many of those degree holders. And I've seen many who claim to be degree holders who were abysmal at knowing how to talk to AI. This study is so epically flawed by being entirely biased toward what Anthropic thinks intelligence looks like, which goes a long way to explaining their studies into what they claim are 'ethics'.